The current smartphone scene is pretty boring. Everything out there is just another rectangular touch screen with Android or iOS on it. There is practically zero innovation in terms of form factors and hardly any in terms of hardware features. Well, there are screen notches, but those aren’t genuinely useful. Okay, the Huawei P20 Pro’s new camera is interesting, but smartphones have still been basically the same for about 10 years. The smartphone market is ripe for disruption!

So, what’s next? Are augmented reality visor goggles going to be the next big thing even though Google Glass already tried and failed at that? Smartwatches certainly haven’t caught on as anything other than an optional accessory. That may still be an emerging market, but smartwatches are still dumb.

Smart speakers are kind of popular though. Amazon just announced a whole slew of products driven by their “Alexa” speech interface. They even made a microwave with Alexa installed! What if we had phones that were entirely driven by an intelligent conversational user interface?

Actually, we’re starting to see some new phones trend in that direction. The JioPhone sold in India is a very small, inexpensive phone running KaiOS with a big speech UI microphone button. The microphone button launches Google Assistant and is limited to the functions the Assistant supports, but there’s no reason a speech UI couldn’t be made to support interacting with an entire ecosystem in the future. That’s one of the big advantages of my ideas for standardizing on a consistent front-end human-computer interaction structure. We did it for the World Wide Web (and for the roads you drive cars on), so why not do it for all of our interaction systems of the future?

Advantages

A full speech/conversational UI device could have many advantages if done well.

- Simple, consistent, and intuitive interface. If there’s one thing our current smartphone ecosystems are not capable of right now, it’s consistency. Graphical user interfaces are all over the place in terms of usability. Many use ambiguous icons that take significant amounts of cognitive energy to understand. Many others also use hidden gestures that are completely undiscoverable to normal users.

- Increased usability and cognitive ease. Due to the increased consistency of a conversational UI, a CUI smartphone would be much easier for people to learn and use. It would basically be mimicking the user interface that we already use with each other as humans. Whether it’s talking to each other with audible speech or typing words using our native language, this is how all humans communicate. If you walk into my office, I don’t hand you a bunch of cryptic icons for you to press and see what happens. I’ll actually speak to you.

- Less expensive! You won’t need a big expensive touch screen or any screen at all. Most functions could be taken care of on the cloud/server side so you won’t need a huge amount of processing power, memory, or storage.

- Battery life. Without the need for a large display or any display at all, battery life could be vastly improved.

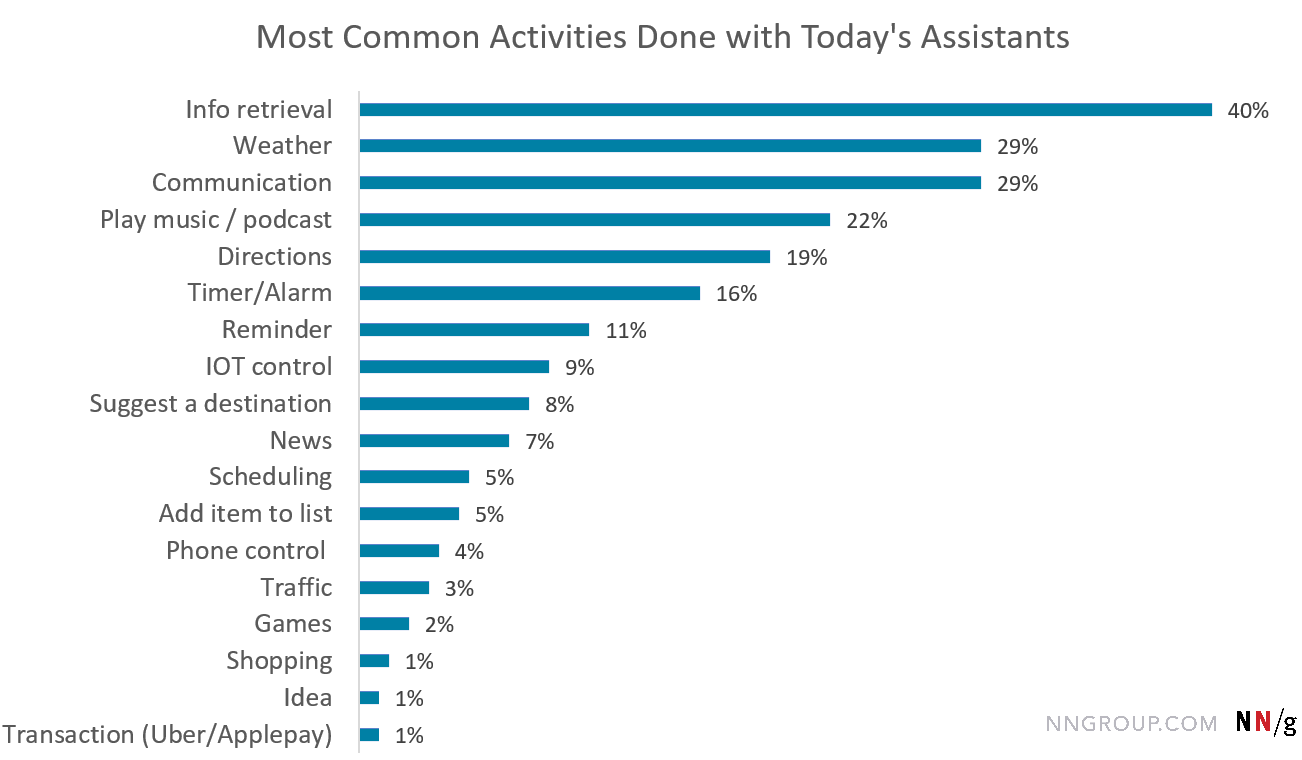

- Speech UIs are becoming increasingly popular. 46% of U.S. adults use speech interfaces.

- Be part of the world. With a speech interface, you can be part of the real world without constantly looking down at a rectangular screen in your hand and poking at it to respond to each notification. Instead, you’ll be notified in your ear and be able to respond via speech just like a normal human being. This would probably make operating motor vehicles on the streets safer as well, since a good speech interface is going to let you keep your eyes on the road.

Disadvantages

- Speech interfaces are currently only good at very limited simple queries and commands.

- Artificial Intelligence isn’t intelligent enough to learn from individual users yet.

- Without a giant screen, you won’t want to watch movies while driving.

- Current speech UIs have very poor usability.

- Other people nearby will be able to hear your speech interface commands.

Many of those disadvantages can easily be overcome. For example, the problem of eavesdroppers hearing your commands can be solved with a privacy mode where the speech UI only asks you yes/no questions. This is exactly what we already do when talking on the phone in a public space where we don’t want the conversation overheard. Another silent, hands-free, eyes-free user interface implementation could be to use jaw muscle movements to respond to in-ear audible prompts. A conversational UI doesn’t have to mean just speech, either. It could be used with text-based electronic messaging as well. Type your commands and answers into a messaging field and it would have the same effect as speaking. Or just revert to the legacy screen-based interaction methods we’ve been using for decades.
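To make the privacy mode idea a little more concrete, here is a minimal sketch in Python of how an open-ended choice could be turned into a series of yes/no questions. Everything in it is hypothetical: the function name `choose_privately`, the `ask_yes_no` callback (which stands in for whatever would whisper a prompt in your ear and detect a quiet "yes" or "no"), and the example options are all my own illustration, not any existing assistant's API.

```python
from typing import Callable, Optional, Sequence


def choose_privately(
    options: Sequence[str],
    ask_yes_no: Callable[[str], bool],
) -> Optional[str]:
    """Pick one option by asking only yes/no questions.

    `ask_yes_no` is a hypothetical hook: it plays the question privately
    (for example through an earpiece) and returns True for "yes".
    """
    for option in options:
        # Bystanders only ever hear "yes" or "no", never the option itself.
        if ask_yes_no(f"Do you want to {option}?"):
            return option
    # The user declined every option.
    return None


if __name__ == "__main__":
    # Scripted stand-in for a real user: says "yes" only to calling back.
    def scripted_user(question: str) -> bool:
        return "call" in question

    choice = choose_privately(
        ["reply to Sam", "call Sam back", "dismiss the notification"],
        scripted_user,
    )
    print(choice)
```

The same function would work unchanged for the text-messaging case: `ask_yes_no` would just send the question as a message and read the typed answer instead of listening for speech.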

The lack of a giant screen in your pocket for watching movies and playing games can be overcome by having giant screens everywhere else. I’ve got them on my desk, in the living room, on the tablet in my bag, etc., and those are much better user experiences than a 6-inch screen that fits in my pocket.

So what do you think? Would you use a smartphone that was focused on a conversational UI? How much are you using existing speech interfaces already? Tap “Discuss this Post” below and let us know in the comments.