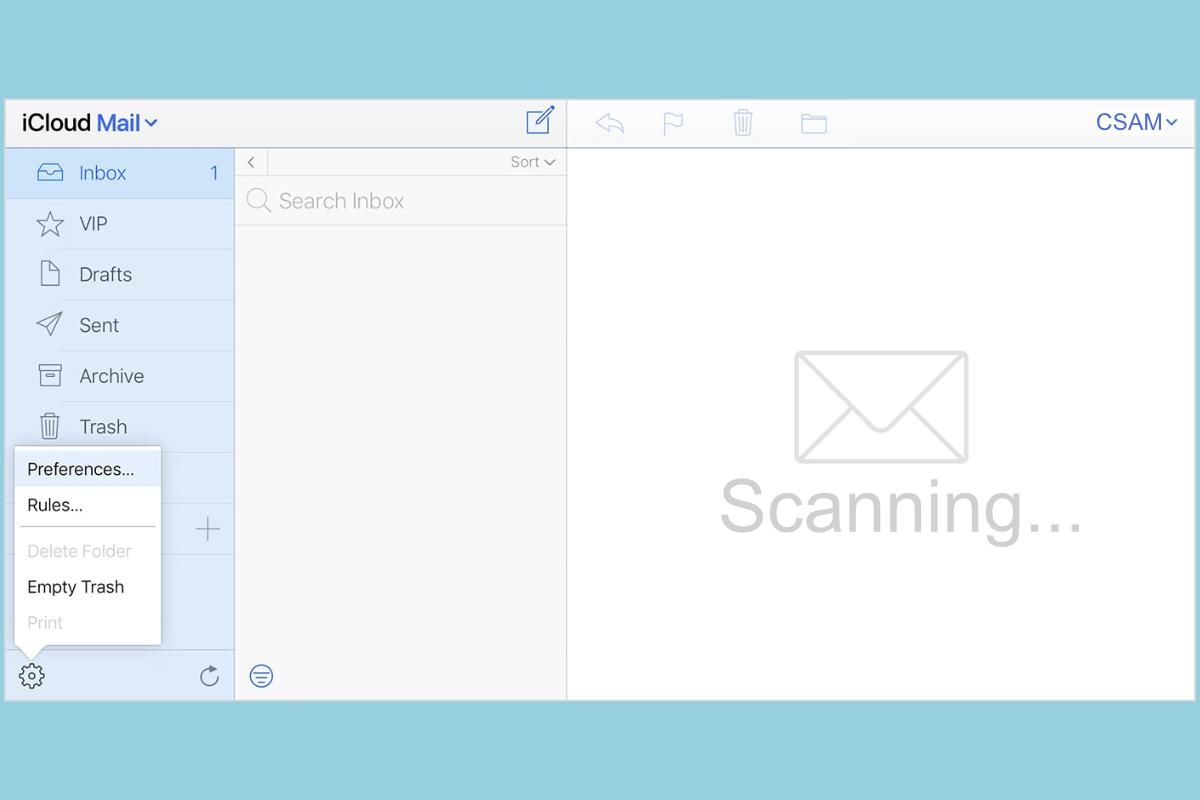

Apple has officially confirmed that it scans iCloud Mail for child sexual abuse material (CSAM) and has been doing so since 2019. However, Apple has not been scanning iCloud Photos or iCloud backups for such content, though that may change in the near future.

Apple confirmed the iCloud Mail scanning to 9to5Mac. Because the emails aren’t encrypted by default, checking attachments as they pass through Apple’s servers was not a major challenge. Apple also told 9to5Mac that it has been scanning other data, but it declined to say what exactly. It was suggested that this is data considered less important, or only tiny bits of data.

A number of other clues suggested that Apple has been doing CSAM scanning for some time. 9to5Mac found an archived version of Apple’s child safety page that said the following:

“Apple is dedicated to protecting children throughout our ecosystem wherever our products are used, and we continue to support innovation in this space. We have developed robust protections at all levels of our software platform and throughout our supply chain. As part of this commitment, Apple uses image matching technology to help find and report child exploitation. Much like spam filters in email, our systems use electronic signatures to find suspected child exploitation. We validate each match with individual review. Accounts with child exploitation content violate our terms and conditions of service, and any accounts we find with this material will be disabled.”

Jane Horvath, Apple’s Chief Privacy Officer, said much the same back in January 2020, according to a report from the time:

“Jane Horvath, Apple’s chief privacy officer, said at a tech conference that the company uses screening technology to look for the illegal images. The company says it disables accounts if Apple finds evidence of child exploitation material, although it does not specify how it discovers it.”

Even Apple’s own employees have called the company out over the scanning and the new privacy changes. Governments are concerned too, while Apple has said that people “misunderstood” the feature. Scanning iCloud for CSAM will likely only bring more controversy. We’ll have to wait and see how Apple responds.